Feb 24, 2026 · 7 min read

Methodology notes

What is OT DataOps? Bringing Data Engineering to the Factory Floor

Why are your data scientists spending 80% of their time cleaning ugly PLC data? Learn how OT DataOps bridges the gap between raw machine noise and AI-ready datasets.

- Evidence level: Medium (field observations + public standards; not a universal benchmark).

- Measurement scope: Performance and economic outcomes vary by hardware, topology, workload shape, sampling profile, and process constraints.

- Primary references: IEC 62443, ISA-95 / IEC 62264, NIST SP 800-82r3.

- Implementation docs: Edge Architecture and Unified Namespace.

The Great AI Disappointment

Let's look at a familiar scenario in modern manufacturing: the executive board mandates that the company must become "AI-driven." It hires an expensive team of brilliant Data Scientists and Cloud IT Engineers.

The Data Scientists spin up their Jupyter Notebooks and connect to the factory's Data Lake, expecting to find beautifully structured datasets. Instead, they find this:

Tag ID: 49021. Value: 43. Timestamp: 12:00:01
Tag ID: DB4.DBX2.1. Value: TRUE. Timestamp: 12:00:02
Tag ID: VIB_01A. Value: ERR_COMM. Timestamp: 12:00:03

What is 49021? Is 43 degrees Celsius or Fahrenheit? What time zone is the timestamp in?

The reality of Industry 4.0 is that machine data is often ugly, noisy, and devoid of context. As a result, highly paid Data Scientists end up spending a significant portion of their time on repetitive data cleaning (trying to match mysterious PLC tags to Excel spreadsheets) before they can write a single line of Machine Learning code.
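The manual cleanup described above can be sketched in Python. This is a minimal illustration, not a production parser: it assumes the raw records arrive one per line in exactly the format shown, and the tag sheet (`TAG_SHEET_CSV`, with its single mapping for tag 49021) is hypothetical. It splits each record into fields, marks error strings like ERR_COMM as bad quality, and joins tags against the sheet; any tag missing from the sheet stays unresolved.

```python
import csv
import io
import re
from dataclasses import dataclass
from typing import Optional, Union

# The three raw records from the scenario above, one per line.
RAW_LINES = [
    "Tag ID: 49021. Value: 43. Timestamp: 12:00:01",
    "Tag ID: DB4.DBX2.1. Value: TRUE. Timestamp: 12:00:02",
    "Tag ID: VIB_01A. Value: ERR_COMM. Timestamp: 12:00:03",
]

# Hypothetical tag sheet, as it might be exported from an engineering spreadsheet.
TAG_SHEET_CSV = """tag,asset,metric,unit
49021,Extruder_A,Temperature,Celsius
"""

# Non-greedy groups let tag names and values contain dots (e.g. DB4.DBX2.1).
RECORD_RE = re.compile(r"Tag ID: (?P<tag>.+?)\. Value: (?P<value>.+?)\. Timestamp: (?P<ts>\S+)")

@dataclass
class Record:
    tag: str
    value: Optional[Union[bool, float]]
    quality: str             # "good" or "bad"
    timestamp: str           # naive clock time: no date, no time zone
    context: Optional[dict]  # None when the tag is missing from the sheet

def parse_value(text: str):
    """Return (value, quality); non-numeric error strings become bad quality."""
    if text in ("TRUE", "FALSE"):
        return text == "TRUE", "good"
    try:
        return float(text), "good"
    except ValueError:
        return None, "bad"

def clean(lines, tag_sheet_csv):
    """Parse raw lines and attach spreadsheet context where a mapping exists."""
    sheet = {row["tag"]: row for row in csv.DictReader(io.StringIO(tag_sheet_csv))}
    records = []
    for line in lines:
        m = RECORD_RE.fullmatch(line.strip())
        if m is None:
            continue  # unparseable line; a real pipeline would quarantine it
        value, quality = parse_value(m["value"])
        records.append(Record(m["tag"], value, quality, m["ts"], sheet.get(m["tag"])))
    return records

records = clean(RAW_LINES, TAG_SHEET_CSV)
for r in records:
    print(r.tag, r.value, r.quality, r.context)
```

Even in this toy version, two of the three tags remain unexplained and the timestamps still lack a date and time zone, which is exactly the work that does not scale when it lives in notebooks.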

When raw data lacks context, teams waste enormous resources on data cleaning. For a senior data scientist who spends 80% of their time on data prep, most of a six-figure salary goes to work a platform could automate, which is a substantial opportunity cost for the enterprise. OT DataOps moves data cleaning from data scientists to platform infrastructure, where it belongs.

This massive bottleneck is exactly what OT DataOps was built to solve.


What is OT DataOps?

DataOps (Data Operations) is a concept originally born in the IT world. It focuses on automating the flow, quality, and delivery of data so that analytics teams can work faster.

OT DataOps (Operational Technology DataOps) takes those same principles and applies them to the unique, chaotic challenges of the factory floor. It is the automated discipline of extracting raw data from industrial assets (PLCs, SCADA, CNCs), cleaning it, filtering out the noise, adding business context, and delivering it securely to the people and systems that need it.

In short: OT DataOps is the assembly line for your data. It takes raw material (voltage signals) and turns it into a finished, valuable product (AI-ready insights).

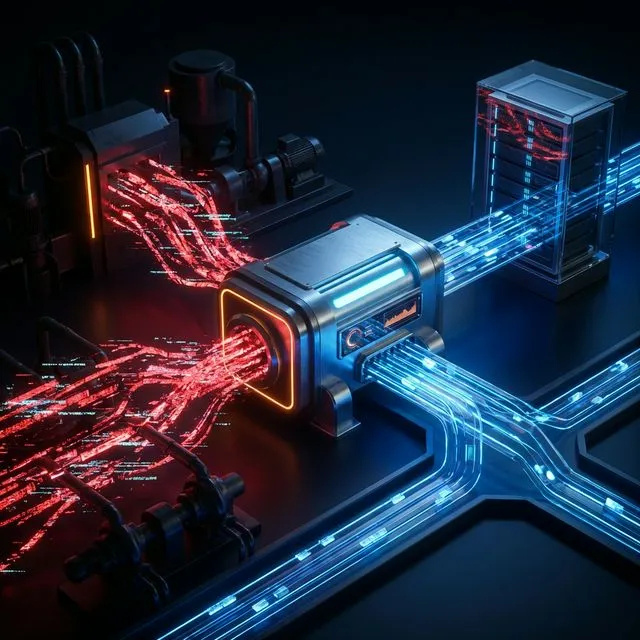

The 4 Stages of the OT DataOps Pipeline

Figure: Legacy PLC (Tag: DB4.DBX2.1) → Proxus Edge (contextualize to JSON, deadbanding, drop duplicates) → Unified Namespace (Topic: Extruder/Temp) → Cloud AI (clean data)

A mature OT DataOps strategy, powered by platforms like Proxus, automates four critical stages of data engineering:

Extraction (Connectivity)

You cannot analyze what you cannot connect to. A factory might have a brand new Siemens S7-1500 sitting next to a 25-year-old Modbus RTU controller. OT DataOps begins with a robust Edge Computing gateway that speaks hundreds of legacy industrial protocols, safely extracting the data without impacting the machine's primary control loop.
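Part of extraction is decoding raw registers correctly. As one illustration: a Modbus holding register is 16 bits wide, so a 32-bit floating-point value spans two registers, and devices differ in which word comes first. The sketch below assumes IEEE 754 floats and standard big-endian byte order within each register; the function name and the `word_swap` flag are hypothetical, and the register values in the usage lines are made up rather than read from a real device.

```python
import struct

def registers_to_float(high: int, low: int, word_swap: bool = False) -> float:
    """Combine two 16-bit Modbus registers into an IEEE 754 32-bit float.

    Assumes big-endian byte order inside each register (the Modbus default);
    word_swap=True handles devices that transmit the low word first.
    """
    if word_swap:
        high, low = low, high
    raw = struct.pack(">HH", high, low)  # 4 bytes, most significant word first
    return struct.unpack(">f", raw)[0]

# 120.0 as a float32 is 0x42F00000, i.e. registers 0x42F0 and 0x0000.
print(registers_to_float(0x42F0, 0x0000))                  # 120.0
print(registers_to_float(0x0000, 0x42F0, word_swap=True))  # 120.0
```

Getting the word order wrong does not raise an error; it silently produces a wrong number. That is one reason this kind of mapping belongs in the edge configuration rather than in every downstream notebook.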

Normalization and Contextualization

This is the most critical step. Raw data should be translated into human-readable information before it leaves the factory. Instead of sending DB4.DBX2.1 = 120, an OT DataOps engine transforms the payload:

{
  "asset": "Extruder_A",
  "location": "Plant_Berlin",
  "metric": "Temperature",
  "value": 120,
  "unit": "Celsius",
  "status": "Warning"
}

Now, when this payload hits the cloud, the Data Scientist immediately knows exactly what they are looking at.
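A minimal sketch of the contextualization step, assuming the edge keeps a lookup table from PLC address to asset metadata. `TAG_MAP`, the `warning_above` threshold of 110 °C, and the "Normal" status value are hypothetical; a real deployment would load this metadata from its asset model rather than hard-code it. Given the reading 120, it produces the payload shown above.

```python
import json

# Hypothetical edge-side tag map: PLC address -> business context.
TAG_MAP = {
    "DB4.DBX2.1": {
        "asset": "Extruder_A",
        "location": "Plant_Berlin",
        "metric": "Temperature",
        "unit": "Celsius",
        "warning_above": 110.0,  # hypothetical alarm threshold
    },
}

def contextualize(address: str, value: float) -> str:
    """Wrap a raw PLC value in its asset context and return a JSON payload."""
    meta = TAG_MAP[address]
    payload = {
        "asset": meta["asset"],
        "location": meta["location"],
        "metric": meta["metric"],
        "value": value,
        "unit": meta["unit"],
        "status": "Warning" if value > meta["warning_above"] else "Normal",
    }
    return json.dumps(payload)

print(contextualize("DB4.DBX2.1", 120))
```

This prints `{"asset": "Extruder_A", "location": "Plant_Berlin", "metric": "Temperature", "value": 120, "unit": "Celsius", "status": "Warning"}`. Keeping the map at the edge means the cryptic address never has to leave the plant.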

Smart Filtering and Deadbanding

Cloud IoT and analytics services (such as those on AWS or Azure) bill for the messages you ingest and the data you store. Raw network ingress is usually free, but IoT messaging, stream ingestion, and storage are not. If a temperature sensor reports the same exact value (22.1°C) every 10 milliseconds, sending all of those duplicate records to the cloud is a massive waste of money. OT DataOps uses Smart Filtering techniques such as Deadbanding (only sending data when the value changes by more than a configured threshold, either in engineering units or as a percentage) and Time-based Aggregation (sending a 1-minute average instead of the 6,000 raw points a 10 ms sensor produces every minute).
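A minimal deadband filter, assuming samples arrive as `(timestamp, value)` pairs; the function name and parameters are illustrative, not a specific product's API. Each new sample is compared against the last value that was actually sent, not against the previous sample, so a slow drift is still reported once it accumulates past the threshold.

```python
from typing import Iterable, Iterator, Optional, Tuple

Sample = Tuple[float, float]  # (timestamp_seconds, value)

def deadband(samples: Iterable[Sample], threshold: float, percent: bool = False) -> Iterator[Sample]:
    """Yield only samples that move outside the deadband around the last sent value.

    percent=False: threshold is in engineering units (e.g. 0.5 for 0.5 °C).
    percent=True:  threshold is a percentage of the last sent value.
    """
    last_sent: Optional[float] = None
    for ts, value in samples:
        if last_sent is None:
            yield ts, value  # always send the first sample
            last_sent = value
            continue
        band = abs(last_sent) * threshold / 100 if percent else threshold
        if abs(value - last_sent) > band:
            yield ts, value
            last_sent = value

# Hypothetical sensor: 22.1 °C every 10 ms for 10 s, then one step to 22.8 °C.
samples = [(i * 0.01, 22.1) for i in range(1000)] + [(10.0, 22.8)]
sent = list(deadband(samples, threshold=0.5))
print(len(samples), "->", len(sent))  # 1001 -> 2
```

Choosing the threshold is the real engineering decision: too wide and meaningful changes are lost, too narrow and sensor noise passes straight through.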

Delivery via Unified Namespace (UNS)

Finally, the clean, structured data is not dumped into a monolithic, unsearchable database. Instead, it is published to a central Unified Namespace (UNS), which acts as an organized directory (like a file system) for the entire enterprise. Whether the consumer is an ERP system calculating costs or an AI model querying data through the Model Context Protocol (MCP), every system reads from a single, standardized source of truth.
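UNS deployments are commonly built on an MQTT broker, where each value lives at a slash-separated topic path and consumers subscribe with wildcard filters. The sketch below implements MQTT's topic-filter matching in plain Python (`+` matches exactly one level; `#` matches all remaining levels, including the parent level) to show how one namespace serves very different consumers. The hierarchy (`Acme/Plant_Berlin/...`) and the subscriber names are hypothetical, and the function skips parts of the spec such as filter validation and the special handling of `$`-prefixed topics.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT topic filter matches a concrete topic.

    '+' matches exactly one level; '#' (valid only as the last level) matches
    the parent level and everything below it. Filter validation and the
    special rule for '$'-prefixed topics are intentionally omitted.
    """
    f_levels = topic_filter.split("/")
    t_levels = topic.split("/")
    for i, level in enumerate(f_levels):
        if level == "#":
            return True
        if i >= len(t_levels):
            return False
        if level != "+" and level != t_levels[i]:
            return False
    return len(f_levels) == len(t_levels)

def route(topic: str, subscriptions: dict) -> list:
    """Names of all subscribers whose filter matches the topic."""
    return [name for name, flt in subscriptions.items() if topic_matches(flt, topic)]

# Hypothetical ISA-95-style hierarchy: enterprise/site/area/line-or-asset/metric
subscriptions = {
    "ERP cost model": "Acme/+/+/+/OEE",
    "Berlin dashboard": "Acme/Plant_Berlin/#",
    "Extruder AI model": "Acme/Plant_Berlin/Extrusion/Extruder_A/+",
}

for topic in [
    "Acme/Plant_Berlin/Extrusion/Extruder_A/Temperature",
    "Acme/Plant_Berlin/Extrusion/Extruder_A/OEE",
    "Acme/Plant_Munich/Packaging/Line1/OEE",
]:
    print(topic, "->", route(topic, subscriptions))
```

Because consumers select data by path rather than by point-to-point integration, adding a new dashboard or model is a new subscription, not a new interface to every machine.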

The Business Impact of OT DataOps

- Faster AI & Analytics: Data scientists stop cleaning data and start building predictive maintenance models much sooner.

- Reduced Cloud Costs: By filtering data at the Edge before it reaches the cloud, enterprises can substantially cut cloud ingestion and storage fees; reductions of 60% to 90% are commonly reported, depending on sampling rates and filter settings.

- Democratized Data: Plant managers no longer have to beg the IT department for a custom SQL report. Because the data in the UNS is already contextualized (e.g., Plant/Line1/OEE), anyone can build their own dashboards easily.

Conclusion

Building a modern, data-driven enterprise on a foundation of disorganized PLC tags is highly inefficient.

OT DataOps is an essential prerequisite for Industry 4.0. By shifting the burden of data engineering down to the Edge (cleaning, filtering, and organizing the data at the source), you unlock the true potential of your cloud analytics and AI investments.

When this may not be suitable

- Lower-frequency telemetry may not justify full distributed complexity.

- Small single-line plants may prefer simpler architectures first.

- Strict legacy constraints may require phased adoption.

- Safety-critical closed-loop control should remain in PLC/Safety PLC layers.

Outcomes depend on workload profile, hardware capacity, and deployment topology.

Frequently Asked Questions

How is OT DataOps different from IT DataOps?

IT DataOps optimizes software-generated data pipelines (databases, APIs, logs). OT DataOps handles machine-generated data from physical sensors and PLCs, dealing with proprietary protocols, real-time constraints, and environments where network outages are common. The Store and Forward pattern is a good example: IT systems use message queues too, but in OT the pattern is essential, because edge gateways must buffer data locally through network outages so that measurements are not lost.
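A minimal store-and-forward sketch: messages go into a bounded local buffer and are sent oldest-first whenever the uplink accepts them. The `send` callable, the buffer size, and the drop-oldest policy on overflow are assumptions for illustration; a real gateway would likely persist the buffer to disk so it survives a reboot.

```python
from collections import deque
from typing import Any, Callable, Deque

class StoreAndForward:
    """Buffer messages locally while the uplink is down; flush them in order on reconnect.

    `send` is a hypothetical transport callable that returns True on success.
    The buffer is bounded: when it is full, the oldest message is discarded.
    """

    def __init__(self, send: Callable[[Any], bool], max_buffered: int = 10_000):
        self.send = send
        self.buffer: Deque[Any] = deque(maxlen=max_buffered)

    def publish(self, message: Any) -> None:
        self.buffer.append(message)  # always enqueue first so ordering is preserved
        self.flush()

    def flush(self) -> int:
        """Send buffered messages oldest-first; stop at the first failure. Returns count sent."""
        sent = 0
        while self.buffer:
            if not self.send(self.buffer[0]):
                break  # link still down: keep the message for the next attempt
            self.buffer.popleft()
            sent += 1
        return sent

# Simulated outage: four messages arrive while the link is down.
link_up = False
delivered = []

def fake_send(msg):
    if link_up:
        delivered.append(msg)
    return link_up

saf = StoreAndForward(fake_send, max_buffered=3)
for v in [1, 2, 3, 4]:
    saf.publish(v)      # buffer holds at most 3, so message 1 is dropped
link_up = True
sent = saf.flush()
print(sent, delivered)  # 3 [2, 3, 4]
```

Dropping the oldest data on overflow favors freshness; some sites would rather keep the oldest and drop new readings, or downsample the backlog, so the policy should be a configuration choice.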

What percentage of raw OT data is actually useful?

It varies, but often only 5–20%. Factory sensors are frequently polled at high rates (every 50–100 ms), but their values change meaningfully far less often. Smart Filtering techniques like deadbanding and time-based aggregation can reduce transmitted data by 80–95% without losing actionable information.
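Time-based aggregation can be sketched as a tumbling window: each fixed interval of raw samples collapses into one summary row. This assumes integer millisecond timestamps (to avoid floating-point boundary errors) and a hypothetical signal sampled every 10 ms for 10 minutes; keeping min and max alongside the mean is a design choice so that short spikes are not averaged away entirely.

```python
from collections import defaultdict
from statistics import fmean

def aggregate(samples, window_ms=60_000):
    """Tumbling-window aggregation over (timestamp_ms, value) pairs.

    Returns one (window_start_ms, min, max, mean, count) row per window.
    """
    buckets = defaultdict(list)
    for ts_ms, value in samples:
        buckets[ts_ms // window_ms].append(value)
    return [
        (k * window_ms, min(vs), max(vs), fmean(vs), len(vs))
        for k, vs in sorted(buckets.items())
    ]

# Hypothetical signal: one sample every 10 ms for 10 minutes (60,000 samples),
# alternating between 22.1 and 22.3 °C.
samples = [(i * 10, 22.1 if i % 2 == 0 else 22.3) for i in range(60_000)]
rows = aggregate(samples)

reduction = 1 - len(rows) / len(samples)
print(len(samples), len(rows), f"{reduction:.2%}")  # 60000 10 99.98%
```

This counts records, not bytes, and the synthetic signal is chosen for simplicity, so the percentage says nothing about what a real plant would achieve.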

Do I need a separate OT DataOps team?

Not necessarily. In most organizations, the existing automation/controls team collaborates with IT data engineers. The key is a shared namespace design (UNS) and clear ownership of the Edge-to-Cloud pipeline.

References

- DataOps Manifesto: Community-driven principles for agile data engineering that OT DataOps adapts to industrial environments. dataopsmanifesto.org

- ISA-95 / IEC 62264: Standard defining the enterprise-control data model that structures the OT DataOps pipeline.

- Eclipse Sparkplug: Payload standardization framework for MQTT-based OT data normalization. sparkplug.eclipse.org

Learn how the Proxus IT/OT Bridge automates your DataOps Pipeline →